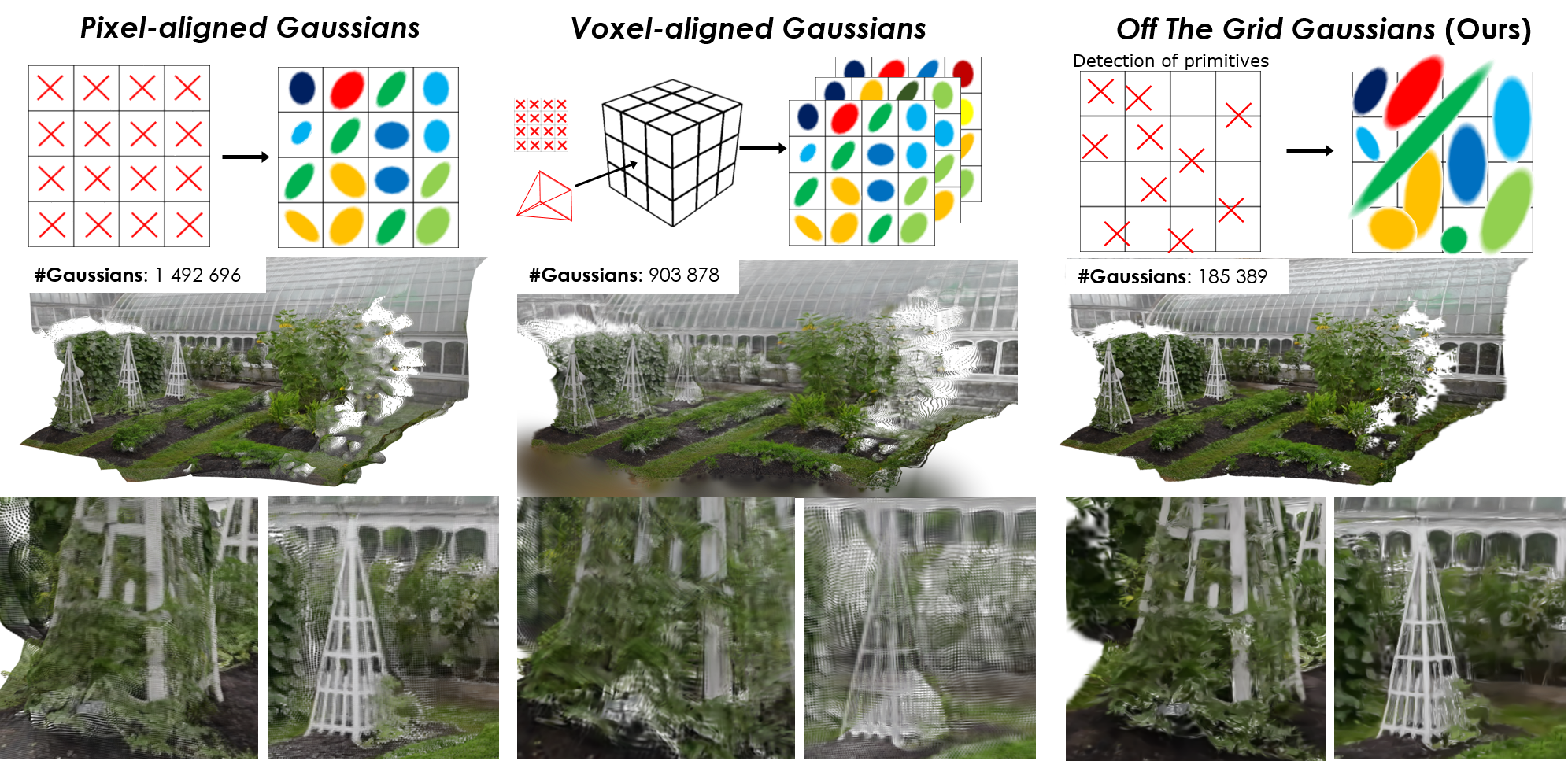

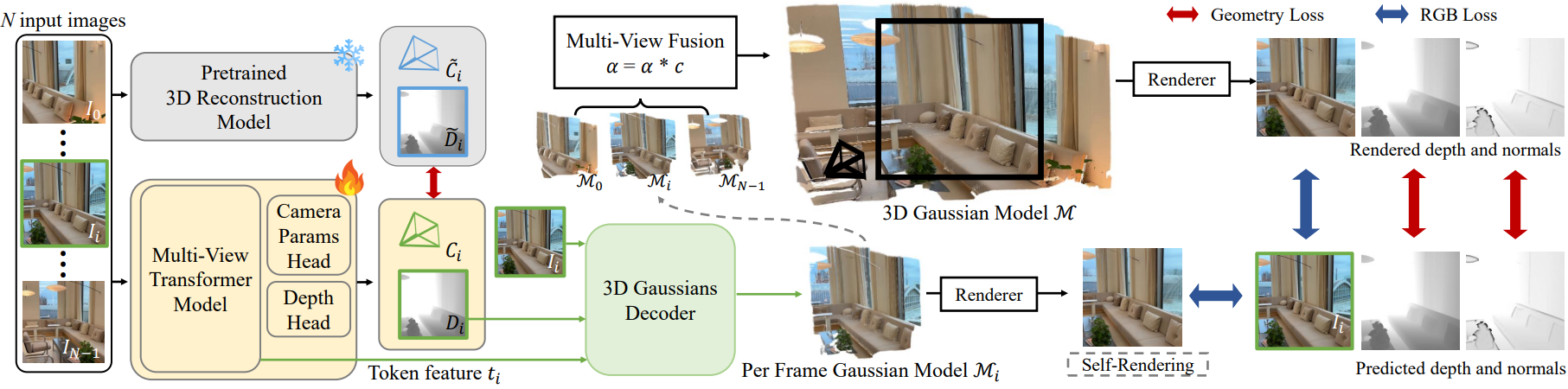

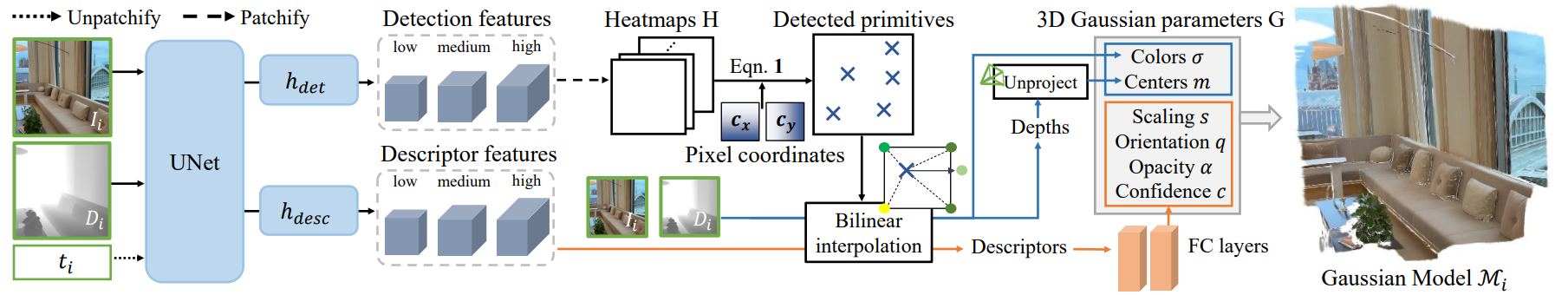

Feed-forward 3D Gaussian Splatting (3DGS) models enable real-time scene generation but are hindered by suboptimal pixel-aligned primitive placement, which relies on a dense, rigid grid that limits both quality and efficiency. We introduce a new feed-forward architecture that detects 3D Gaussian primitives at a sub-pixel level, replacing the pixel grid with an adaptive, "Off-The-Grid" distribution. Inspired by keypoint detection, our decoder learns to locally distribute primitives across image patches. We also provide an Adaptive Density mechanism that assigns a varying number of primitives to each patch based on Shannon entropy. We combine the proposed decoder with a pre-trained 3D reconstruction backbone and train them end-to-end using photometric supervision without any 3D annotation. The resulting pose-free model generates photorealistic 3DGS scenes in seconds, achieving state-of-the-art novel view synthesis for feed-forward models. It outperforms competitors while using far fewer primitives, demonstrating a more accurate and efficient allocation that captures fine details and reduces artifacts.
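To make the Adaptive Density idea concrete, here is a minimal sketch of entropy-driven budgeting. It is an illustration under stated assumptions, not the paper's implementation: the function names `patch_entropy` and `allocate_primitives`, the use of a grayscale intensity histogram as the entropy source, the patch size, the bin count, and the proportional allocation rule are all hypothetical choices made for this example.

```python
import numpy as np


def patch_entropy(gray, patch=16, bins=32):
    """Shannon entropy (in bits) of the intensity histogram of each patch.

    `gray` is a 2D array with values in [0, 1]. Rows and columns that do not
    fill a whole patch are ignored. (Assumption: entropy is measured on pixel
    intensities; the paper may measure it on something else.)
    """
    rows, cols = gray.shape[0] // patch, gray.shape[1] // patch
    entropy = np.zeros((rows, cols))
    for i in range(rows):
        for j in range(cols):
            block = gray[i * patch:(i + 1) * patch, j * patch:(j + 1) * patch]
            hist, _ = np.histogram(block, bins=bins, range=(0.0, 1.0))
            p = hist / hist.sum()
            p = p[p > 0]  # empty bins contribute nothing to the entropy
            entropy[i, j] = -(p * np.log2(p)).sum()
    return entropy


def allocate_primitives(entropy, budget, min_per_patch=1):
    """Split a total primitive budget across patches in proportion to entropy.

    Every patch receives at least `min_per_patch` primitives; the remainder is
    shared proportionally, and rounding leftovers go to the richest patches.
    (Assumption: a simple proportional rule, chosen only for illustration.)
    """
    flat = entropy.ravel()
    n = flat.size
    spare = budget - n * min_per_patch
    if spare < 0:
        raise ValueError("budget is too small for the per-patch minimum")
    total = flat.sum()
    weights = flat / total if total > 0 else np.full(n, 1.0 / n)
    counts = min_per_patch + np.floor(weights * spare).astype(int)
    leftover = max(budget - int(counts.sum()), 0)
    richest = np.argsort(-flat)[:leftover]
    counts[richest] += 1
    return counts.reshape(entropy.shape)


# Toy image: flat left half, noisy right half.
image = np.full((32, 32), 0.5)
image[:, 16:] = np.random.default_rng(0).random((32, 16))
counts = allocate_primitives(patch_entropy(image), budget=100)
print(counts)
```

In this toy case the flat patches keep only the minimum allocation, while the textured patches absorb the rest of the budget. That is the qualitative behavior the abstract describes: fewer primitives where the image carries little information, more where detail is dense.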

We fine-tune VGGT to predict and render 3D Gaussians in a self-supervised loop that does not require 3D annotations for training.

Our 3D Gaussians Decoder learns to detect and describe 3D primitives from 2D images, depth maps, and latent features.
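One common way keypoint detectors obtain sub-pixel positions is a soft-argmax over a per-patch score map. The sketch below shows that technique only as an illustration of what "detecting" a primitive off the pixel grid can mean. Whether the decoder uses soft-argmax is an assumption, and the names `soft_argmax_2d` and `patch_to_image`, the temperature parameter, and the convention that pixel centers sit at integer coordinates are hypothetical.

```python
import numpy as np


def soft_argmax_2d(logits, temperature=1.0):
    """Expected (x, y) location under a softmax over a patch score map.

    Returns continuous coordinates inside the patch, so the detected point
    is not restricted to pixel centers.
    """
    h, w = logits.shape
    z = logits / temperature
    z = z - z.max()  # subtract the max for numerical stability
    p = np.exp(z)
    p /= p.sum()
    ys, xs = np.mgrid[0:h, 0:w]
    return float((p * xs).sum()), float((p * ys).sum())


def patch_to_image(dx, dy, patch_row, patch_col, patch=8):
    """Convert an in-patch offset to full-image pixel coordinates."""
    return patch_col * patch + dx, patch_row * patch + dy


# Two equally strong responses in neighboring pixels: the detected
# location falls halfway between them, off the pixel grid.
scores = np.zeros((8, 8))
scores[3, 2] = 50.0
scores[3, 3] = 50.0
dx, dy = soft_argmax_2d(scores)
print(patch_to_image(dx, dy, patch_row=1, patch_col=2))
```

Because the output is an expectation rather than a hard argmax, it is differentiable with respect to the scores, which is what makes this kind of detector trainable end-to-end from a photometric loss.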

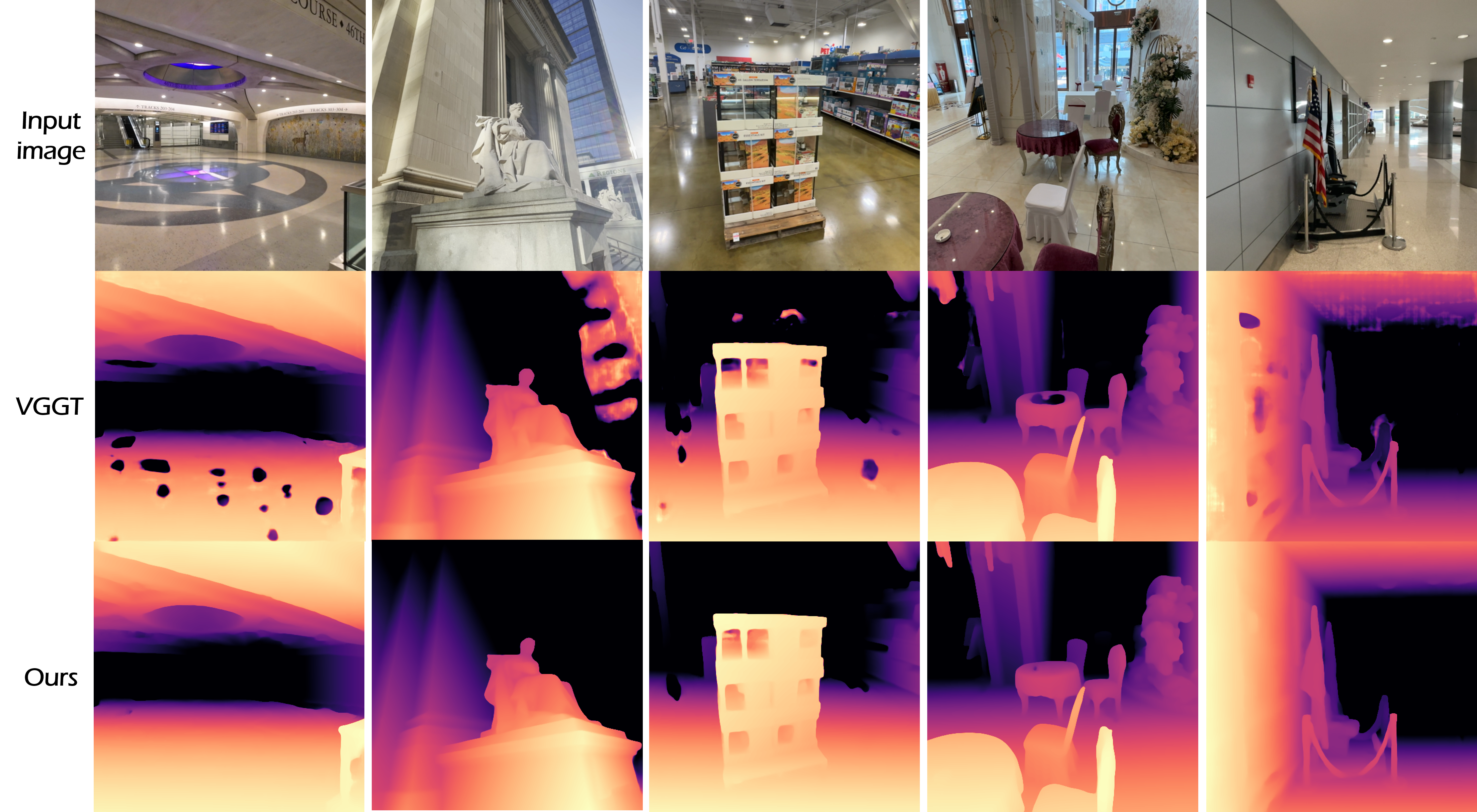

Fine-tuning VGGT for rendering with our method avoids depth estimation failures in specular or sky areas.

We also observe that our fine-tuning improves camera pose and focal length estimation. See the paper for more details.

@inproceedings{moreau2023human,

title={Off The Grid: Detection of Primitives for Feed-Forward 3D Gaussian Splatting},

author={Moreau, Arthur and Shaw, Richard and Nazarczuk, Michal and Shin, Jisu and Tanay, Thomas and Zhang, Zhensong and Xu, Songcen and P{\'e}rez-Pellitero, Eduardo},

booktitle={CVPR},

year={2026}

}